One would think that, with the rising quit rate and the high cost of turnover (in both actual and hidden costs), organizations would base their hiring decisions on hard data. And yet time and again the evidence [1] suggests that hiring managers “go with their gut” – that is, they allow non-job-related factors (e.g., cognitive biases such as the halo effect) to influence their selection decisions.

There is a way to address this concern: collecting objective data on candidate performance. First I will give a topline explanation of how this works, then follow up with a simple example to bring it to life.

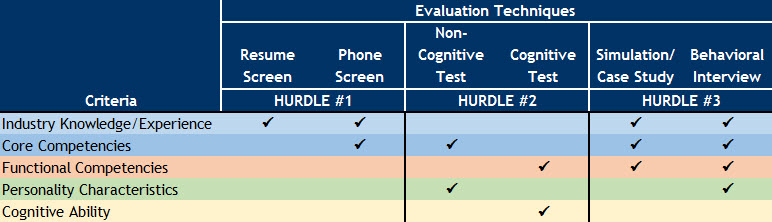

For those of you following along, I have recreated a matrix from the May blog and added a row for “hurdles”, which act as gates that reduce the size of the pool while increasing candidate quality.

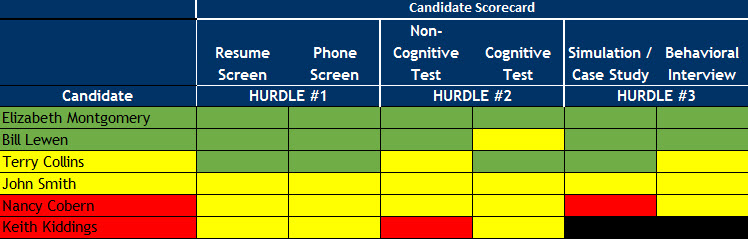

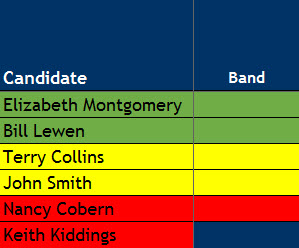

To show how this works at a high level, I have replaced the criteria column with candidate names in the graphic below:

As part of the validation study, green, yellow, and red (i.e., high, medium, and low, respectively) bands have been identified for each test within each hurdle. Scores are then standardized and averaged across tests; candidates whose average scores fall in the green and yellow bands move on to the next hurdle.

As you can see above, Elizabeth and Bill would be considered the top candidates for the job, followed by Terry and John. Nancy and Keith would not be considered viable candidates; Keith did not make it past Hurdle #2.
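The standardize-and-average step within a hurdle can be sketched in Python. This is a minimal illustration, not the validated scoring procedure: it assumes z-score standardization within each test, and the test names, candidate labels, raw scores, and function names are all hypothetical.

```python
from statistics import mean, pstdev


def standardize(raw_scores):
    """Convert one test's raw scores to z-scores (an assumed standardization)."""
    values = list(raw_scores.values())
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # Every candidate scored the same, so this test does not differentiate.
        return {name: 0.0 for name in raw_scores}
    return {name: (score - mu) / sigma for name, score in raw_scores.items()}


def average_across_tests(tests):
    """Average each candidate's z-scores over all tests in one hurdle."""
    standardized = [standardize(scores) for scores in tests.values()]
    candidates = standardized[0].keys()  # assumes every test covers the same candidates
    return {name: mean([z[name] for z in standardized]) for name in candidates}


# Hypothetical raw scores on two differently scaled tests (not from the article).
hurdle_tests = {
    "test_x": {"Candidate A": 82, "Candidate B": 74, "Candidate C": 61},
    "test_y": {"Candidate A": 4.5, "Candidate B": 3.0, "Candidate C": 3.5},
}

averaged = average_across_tests(hurdle_tests)
for name, z in sorted(averaged.items(), key=lambda item: -item[1]):
    print(f"{name}: {z:+.2f}")
```

Standardizing first puts tests with different scales on a common footing before they are averaged. The averaged scores would then be compared against the band cutoffs established in the validation study.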

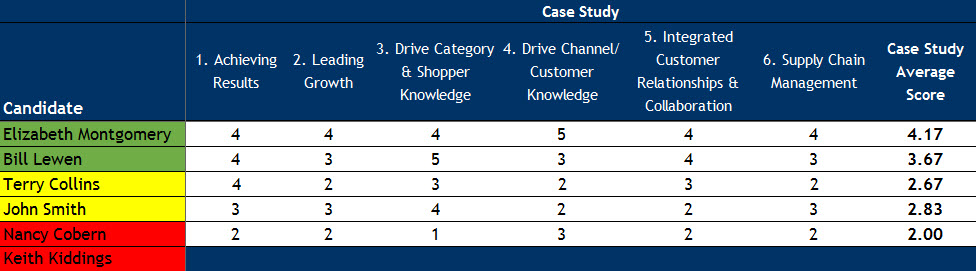

To show how this works, let’s consider Hurdle #3, in which candidates are evaluated using a case study and a behavioral interview. For simplicity, interviewers use a 5-point rating scale (e.g., 5=Exceeds Expectations, 3=Meets Expectations, 1=Below Expectations) to assess performance on both tools, with bands defined as follows:

- Green: >= 3.75

- Yellow: >= 2.50, < 3.75

- Red: < 2.50
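These cutoffs translate directly into a small lookup. The sketch below is illustrative only; the function and constant names are my own, and only the cutoff values come from the bands listed above.

```python
GREEN_CUTOFF = 3.75   # scores at or above this value fall in the green band
YELLOW_CUTOFF = 2.50  # scores at or above this value (and below green) are yellow


def band(score):
    """Map an average rating on the 5-point scale to its band."""
    if score >= GREEN_CUTOFF:
        return "green"
    if score >= YELLOW_CUTOFF:
        return "yellow"
    return "red"


for example in (4.2, 3.75, 3.0, 2.49):
    print(example, band(example))
```

Note that the boundaries are inclusive at the lower edge of each band, so a score of exactly 3.75 is green and exactly 2.50 is yellow.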

Here are sample scores for the case study:

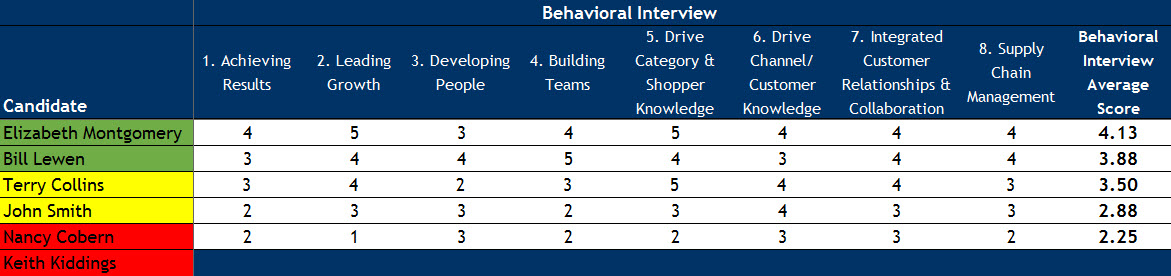

Followed by sample scores for the behavioral interview:

Two things to note in the above graphics. First, Keith did not pass Hurdle #2 and therefore has no scores for Hurdle #3. Second, some of the same competencies are assessed in both tools, but more competencies are measured in the behavioral interview than in the case study.

Test scores are then averaged (i.e., the mean of the two tool means) to produce the final banding information shown to the left.
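A “mean of the mean” calculation can be sketched as follows. The ratings are hypothetical and are not the sample scores from the graphics; “Competency C” and “Competency D” are placeholder names, and the function names are my own.

```python
from statistics import mean

GREEN_CUTOFF = 3.75
YELLOW_CUTOFF = 2.50


def band(score):
    """Map an average rating on the 5-point scale to its band."""
    if score >= GREEN_CUTOFF:
        return "green"
    if score >= YELLOW_CUTOFF:
        return "yellow"
    return "red"


def mean_of_means(*tools):
    """Average each tool's competency ratings, then average those tool means."""
    return mean([mean(list(ratings.values())) for ratings in tools])


# Hypothetical ratings for one candidate (illustrative only).
case_study = {"Leading Growth": 3, "Developing People": 3}
interview = {
    "Leading Growth": 4,
    "Developing People": 4,
    "Competency C": 5,
    "Competency D": 3,
}

final_score = mean_of_means(case_study, interview)
pooled_score = mean(list(case_study.values()) + list(interview.values()))

print("mean of means:", final_score, "->", band(final_score))
print("pooled mean:  ", round(pooled_score, 2))
```

Averaging the tool means gives the case study and the interview equal influence, even though the interview covers more competencies. Pooling all ratings into a single average would instead let the interview dominate, which is why the two numbers differ here.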

There are a few things I encourage you to consider when implementing a similar process for your own organization.

- I recommend not displaying (or making decisions on) the actual final scores, which is why they are not displayed above. Because tests inherently contain measurement error, it is reasonable to assume that score differences within a band are meaningless. In the example above I created bands based on the rating scale the interviewers used. With other types of testing (e.g., personality or cognitive ability tests), there are other means of calculating bands (e.g., the standard error of the difference; for more details see any number of books by Robert Guion).

- Making selection decisions based on hard data implies that you should not hire a candidate in a way that contradicts your methodology, e.g., offering Terry or John the position over Elizabeth or Bill. Such a decision would call into question (from both practical and legal standpoints) the entire assessment process. Thus, hire from within the green band first, followed by the yellow band.

- When there are more candidates than there are positions, final selection decisions must be made based on job-related criteria. For example, the hiring manager might select Elizabeth over Bill based on her extensive industry-specific experience. To maximize a company’s legal defensibility, these criteria should be identified and documented a priori.

- Many organizations use competency-level weighting to help shape the candidate pool. In the example above, I have assigned equal weighting to each competency; however, weights can be used to reflect an organization’s values or a job’s priorities. In my example, the hiring manager could assign additional weight to the Leading Growth and Developing People competencies as a way to reward (penalize) candidates with highly (poorly) developed leadership skills.

- Finally, having hard data helps inform candidate transitions: candidates hired from the yellow band will have unique onboarding needs vs. those hired from the green band. In both cases I recommend creating a customized onboarding plan that leverages strengths and enhances opportunities. I will be discussing this in more detail in the fall.
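The competency-level weighting mentioned in the list above can be illustrated with a weighted average. This is a sketch under assumptions: the ratings, the weight values, the placeholder “Competency C”, and the function name are all hypothetical, and an organization would set its own weights before evaluating candidates.

```python
def weighted_mean(ratings, weights=None):
    """Average competency ratings; unlisted competencies get a weight of 1.0."""
    weights = weights or {}
    total_weight = sum(weights.get(name, 1.0) for name in ratings)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted_sum = sum(
        rating * weights.get(name, 1.0) for name, rating in ratings.items()
    )
    return weighted_sum / total_weight


# Hypothetical ratings for one candidate with strong leadership skills.
ratings = {"Leading Growth": 5, "Developing People": 4, "Competency C": 2}

# Hypothetical weights that double the influence of the leadership competencies.
leadership_weights = {"Leading Growth": 2.0, "Developing People": 2.0}

equal = weighted_mean(ratings)
weighted = weighted_mean(ratings, leadership_weights)
print(f"equal weighting:      {equal:.2f}")
print(f"leadership weighting: {weighted:.2f}")
```

With these made-up numbers, the candidate averages about 3.67 under equal weighting and 4.00 once the leadership competencies count double. Under the 3.75 green cutoff from the Hurdle #3 example, that is enough to move the candidate from the yellow band to the green band.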

In next month’s blog, I will describe the challenges and benefits of evaluating a company’s talent selection program on an ongoing basis. As always, I encourage you to leave your comments and questions in the text box below and to participate in a 3-minute anonymous survey – both of which will help drive discussion and inform subsequent blog posts.

To discuss your specific talent selection issues and challenges, please contact me at 203-817-7522.

[1] Selection Forecast 1999, 2005, 2012